If you’ve been in the privacy world for any amount of time, you recognize there has been a marked increase in the speed at which our world operates. New threats to our data are introduced every day. With the expanding scope of what constitutes protected and sensitive data, the number of privacy cases we must manage at any given time is increasing.

Privacy professionals are being asked to do more, and faster than ever.

A major forcing function behind this acceleration came when the GDPR introduced a 72-hour timeframe for breach notification, under which the supervisory authority must be notified “without undue delay and, where feasible, not later than 72 hours after having become aware” of the breach. From a US perspective, the shift from loosely defined notification timeframes in most cases (generally 30 or 45 days) to a window measured in hours under the GDPR signified a marked change of pace. As regulations continue to evolve, we see this pace as a growing trend, not an anomaly.
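To make the compression concrete, the GDPR deadline arithmetic can be sketched in a few lines of Python. This is a minimal sketch, assuming the clock starts at a single, known "became aware" timestamp; the function names are hypothetical, and the sketch does not model the "where feasible" qualification or any other regulation's rules.

```python
from datetime import datetime, timedelta, timezone

# GDPR Art. 33(1): notify the supervisory authority "where feasible,
# not later than 72 hours after having become aware" of the breach.
GDPR_NOTIFICATION_WINDOW = timedelta(hours=72)


def gdpr_notification_deadline(became_aware: datetime) -> datetime:
    """Latest time (where feasible) to notify the supervisory authority."""
    if became_aware.tzinfo is None:
        # Naive datetimes make "72 hours" ambiguous across time zones.
        raise ValueError("use a timezone-aware datetime")
    return became_aware + GDPR_NOTIFICATION_WINDOW


def hours_remaining(became_aware: datetime, now: datetime) -> float:
    """Hours left before the 72-hour mark (negative once it has passed)."""
    return (gdpr_notification_deadline(became_aware) - now).total_seconds() / 3600


aware = datetime(2019, 7, 1, 9, 30, tzinfo=timezone.utc)
print(gdpr_notification_deadline(aware))  # 2019-07-04 09:30:00+00:00
print(hours_remaining(aware, datetime(2019, 7, 2, 9, 30, tzinfo=timezone.utc)))  # 48.0
```

Compared with a 30- or 45-day window, the whole budget here is three calendar days, which leaves little room for slow escalation.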

Of course, meeting regulation-dictated notification deadlines isn’t the only reason to manage data privacy incidents as quickly and consistently as possible. The longer an incident lingers, the greater the risk to an organization. Quickly escalating incidents to the privacy team, and consistently and defensibly scoring their risk to determine notification requirements, are essential.

That’s why, for this month’s benchmarking article, I’d like to dig into what is a reasonable standard by which you can measure your incident response timeframes, to answer the question: how long does it take to assess and decide on a privacy incident?

Incident Response Timeframes and Establishing Industry Standards

Before we dive into the data, a few things to note: for the purposes of this article, we will look at incidents from the last 12 months (July 2018-July 2019) and break down the timeframe from the date an incident occurred to the date an assessment decision was reached on whether to notify regulators and affected individuals, as required by the applicable regulations.
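If you want to measure your own program the same way, each incident needs four dates: when it occurred, when it was discovered, when its risk was scored, and when the notification decision was made. The Python sketch below assumes a hypothetical `Incident` record with those four fields (the names are illustrative, not a Radar schema) and counts whole calendar days between them.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass
class Incident:
    """Hypothetical incident record; field names are illustrative only."""
    occurred: date
    discovered: date
    scored: date   # risk assessment and scoring completed
    decided: date  # notify / do-not-notify decision reached

    def phase_days(self) -> dict[str, int]:
        """Calendar days spent in each phase, plus the end-to-end total."""
        return {
            "occurrence_to_discovery": (self.discovered - self.occurred).days,
            "discovery_to_scoring": (self.scored - self.discovered).days,
            "scoring_to_decision": (self.decided - self.scored).days,
            "total": (self.decided - self.occurred).days,
        }


def in_window(incident: Incident, start: date, end: date) -> bool:
    """True if the incident occurred within [start, end], inclusive."""
    return start <= incident.occurred <= end


incident = Incident(
    occurred=date(2019, 3, 1),
    discovered=date(2019, 3, 14),
    scored=date(2019, 3, 30),
    decided=date(2019, 4, 1),
)
print(incident.phase_days())
# {'occurrence_to_discovery': 13, 'discovery_to_scoring': 16, 'scoring_to_decision': 2, 'total': 31}

# Window boundaries are assumed; the article only says July 2018-July 2019.
print(in_window(incident, date(2018, 7, 1), date(2019, 7, 31)))  # True
```

Filtering on the occurrence date keeps the reporting window consistent with a "date the incident occurred" starting point.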

It’s also important to note that Radar metadata comes from organizations using purpose-built automation to manage the privacy incident response lifecycle. This means the timeframes below are likely to be shorter than those of privacy teams relying on manual processes. Consider also that other industry reports addressing this benchmark draw on different data. For example:

- The 2019 BakerHostetler Data Security Incident Response Report showed an average of 66 days from incident occurrence to discovery, and an average of 56 days from discovery to notification.

- The 2019 Verizon Data Breach Investigations Report indicated that 56% of the breaches included in the report took months or longer to discover.

- The 2019 Cost of a Data Breach study reported the average time to identify and contain a breach was 279 days.

The above industry reports tend to focus on reported data breaches, missing the incidents that never reach the threshold of a data breach requiring notification. In other words, your mileage may vary.

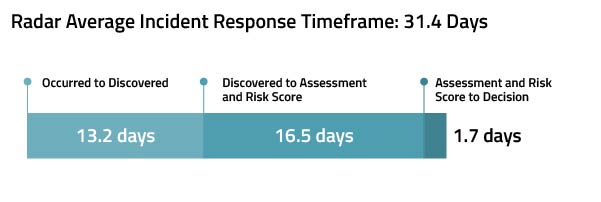

On average, incidents within Radar are risk assessed, scored, and decisioned in less than 32 days after they occur, significantly faster than general industry practice. Let’s break that figure down further:

- 13.2 days from the date the incident occurred to the date it was discovered. Think of this as the time it takes for an incident to be detected and reported to the proper channels within the privacy team.

- 16.5 days from discovery to incident assessment or risk scoring. This time period represents the length of time to investigate, confirm the incident risk factors, and score the incident so the privacy and legal teams can make the decision whether or not to notify.

- 1.6 days from risk scoring to notification decision. This represents the time after the incident has been assessed and the risk has been scored in which the privacy team makes the final decision whether or not to notify regulators and/or affected individuals based on the requirements of all applicable regulations.
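As a quick check, the three phase averages add up to the headline figure. (Summing phase averages reproduces the average end-to-end time only if every incident is counted in all three phases, which is assumed here.)

```latex
\underbrace{13.2}_{\text{occurred}\,\to\,\text{discovered}}
+ \underbrace{16.5}_{\text{discovered}\,\to\,\text{scored}}
+ \underbrace{1.6}_{\text{scored}\,\to\,\text{decided}}
= 31.3 \text{ days} < 32 \text{ days}
```

Nearly all of that time sits in the first two phases; the final decision takes under two days.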

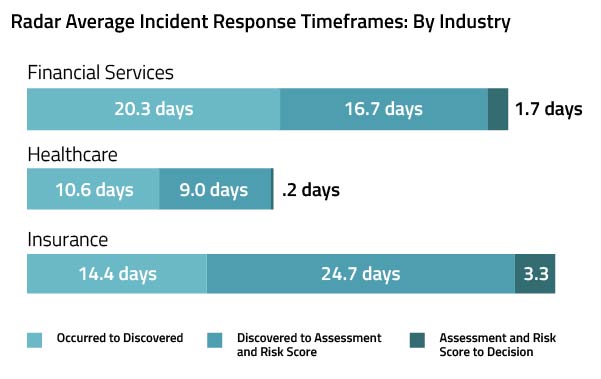

It’s interesting to note the slight variations in incident response timeframes across industries, which may reflect industry-specific regulatory burdens.

Identify Areas to Accelerate Incident Response

What should you do with this information? The purpose of any benchmarking data is to draw a line in the sand for your privacy program, to see where your organization is compared with peers, and to focus on areas that could use improvement. For example:

- Does your organization take longer to discover incidents? This could indicate a training issue: do employees outside the privacy department know what constitutes a privacy incident, and whom to alert? Are there areas where you could streamline incident reporting to the privacy department and effectively “deputize” everyone in the organization as honorary privacy officers, your boots on the ground when it comes to identifying and reporting privacy incidents?

- Maybe your organization experiences a slowdown in the investigation and assessment phase. Does your team have the resources it needs at its fingertips, like libraries of up-to-date legal requirements for the applicable jurisdictions? Or perhaps the investigation lingers because details required for a proper assessment are missing from the original report, resulting in a lot of back-and-forth emails, calls, and overall inefficient communication?

- Once you have all the details of an incident, does your organization stall in making a decision to notify? Is this a result of unclear role delineation between privacy, security, and legal? Could this be due to a reliance on external resources (investigators, legal counsel)?
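One way to decide which of these questions to start with is to compare your own per-phase averages against the Radar figures reported in this article. The Python sketch below assumes you already have per-incident phase durations in days; the phase keys, the `PHASE_FOCUS` mapping, and the sample `history` are hypothetical and only illustrate the comparison.

```python
from statistics import mean

# Radar phase averages reported in this article, in days.
RADAR_BENCHMARK_DAYS = {
    "occurrence_to_discovery": 13.2,
    "discovery_to_scoring": 16.5,
    "scoring_to_decision": 1.6,
}

# Illustrative mapping from each phase to the questions it points to.
PHASE_FOCUS = {
    "occurrence_to_discovery": "training and incident reporting channels",
    "discovery_to_scoring": "investigation resources and completeness of initial reports",
    "scoring_to_decision": "role delineation and reliance on external resources",
}


def slower_phases(per_incident_days, benchmark=RADAR_BENCHMARK_DAYS):
    """Return {phase: (your_average, benchmark_average)} for phases slower than the benchmark."""
    slower = {}
    for phase, bench in benchmark.items():
        avg = mean(days[phase] for days in per_incident_days)
        if avg > bench:
            slower[phase] = (round(avg, 1), bench)
    return slower


# Hypothetical per-incident phase durations (days) for one organization.
history = [
    {"occurrence_to_discovery": 20, "discovery_to_scoring": 10, "scoring_to_decision": 1},
    {"occurrence_to_discovery": 30, "discovery_to_scoring": 14, "scoring_to_decision": 3},
]

for phase, (yours, bench) in slower_phases(history).items():
    print(f"{phase}: {yours} vs {bench} days -> review {PHASE_FOCUS[phase]}")
```

Because the benchmark reflects automated programs, a phase that trails it is a prompt for closer review rather than proof of a problem.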

They say that time is money. You can spend time, you can save time; there’s a reason so many of our sayings about time relate to currency. It is by far our most precious resource, and one you cannot earn back. In our fast-paced world, using time wisely is critical for privacy professionals working toward compliance with data breach notification requirements. But remember: speed is just one part of the equation. Quickly identifying, escalating, assessing, scoring, and deciding on an incident is critical to meeting breach notification timeframes. Doing that same work consistently and defensibly is critical to passing regulatory audits and demonstrating the maturity of your privacy program.


About the data used in this series: Information extracted from Radar for purposes of statistical analysis is aggregated metadata that is not identifiable to any customer or data subject. The incident metadata available in the Radar platform is representative of organizations that use automation best practices to help them perform a consistent and objective multi-factor incident risk assessment using a methodology that incorporates incident-specific risk factors (sensitivity of the data involved and severity of the incident) to determine if the incident is a data breach requiring notification to individuals and/or regulatory bodies. Radar ensures that the incident metadata we analyze is in compliance with the Radar privacy statement, terms of use, and customer agreements.